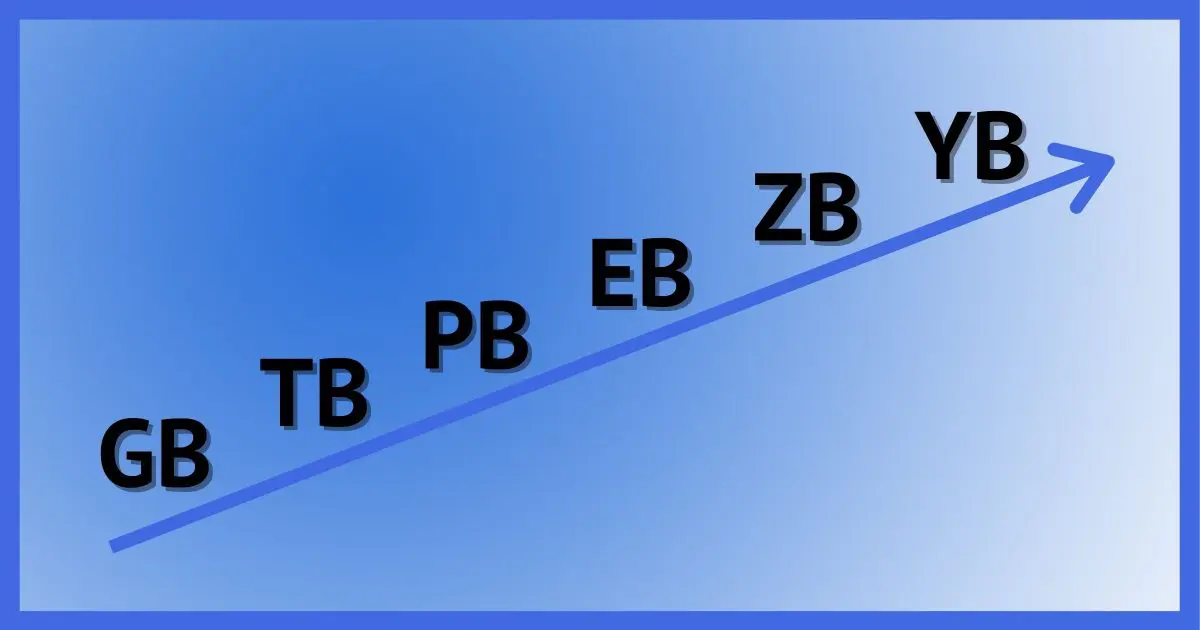

After terabytes come petabytes, and then more and more bytes.

The only unchanging thing in the computer industry is change itself. Today’s topic is storage: specifically, how much and what we call it.

Let’s review some size-related and speed-related terms for good measure.

What comes after terabytes?

The various terms relating to bytes are measurements of storage. Kilobyte, megabyte, gigabyte, terabyte, petabyte, exabyte, zettabyte, and yottabyte are terms used to represent increasing powers of 1024. Hertz and FLOPS are two different measurements of computing speed or power; they measure the clock speed and the ability to process floating-point numbers, respectively.

Bytes, bytes, and more bytes

That a byte is equal to eight bits (where a bit is either a zero or one) is pretty obscure to most people, and honestly, not terribly important to general understanding.

A byte usually represents one character. The word “supercalifragilisticexpialidocious”, for example, takes 34 bytes of storage, one for each character. The Project Gutenberg plain-text version of The Bible takes up 5,218,805 bytes of storage.
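To make the "usually" concrete, here is a minimal Python sketch. It assumes the text is stored as UTF-8, which is a common encoding but not the only one; the variable names are just for illustration.

```python
word = "supercalifragilisticexpialidocious"

# Plain English letters take one byte each in UTF-8,
# so the character count and the byte count match.
word_bytes = word.encode("utf-8")
print(len(word), len(word_bytes))  # 34 34

# Characters outside the basic ASCII set need more than one byte in UTF-8.
accented = "\u00e9"  # the single character "e with acute accent"
accented_bytes = accented.encode("utf-8")
print(len(accented), len(accented_bytes))  # 1 2
```

For plain English text, counting characters is a good estimate of the storage needed.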

A kilo-byte, or kilobyte, is 1024 bytes, “kilo” being the prefix for 1000. Computers like to think in powers of two, so we use 1024. That Bible file, then, is around five megabytes.

Each multiple of 1024 has its own term. (This will get controversial, as we’ll see in a moment.)

| Bytes | Common name | Magnitude | Relationship | Correct name |
|---|---|---|---|---|
| 1,024 | kilobyte (KB) | thousand | 1024 bytes (1024¹) | kibibyte |
| 1,048,576 | megabyte (MB) | million | 1024 kilobytes (1024²) | mebibyte |
| 1,073,741,824 | gigabyte (GB) | billion | 1024 megabytes (1024³) | gibibyte |
| 1,099,511,627,776 | terabyte (TB) | trillion | 1024 gigabytes (1024⁴) | tebibyte |
| 1,125,899,906,842,624 | petabyte (PB) | quadrillion | 1024 terabytes (1024⁵) | pebibyte |
| 1,152,921,504,606,846,976 | exabyte (EB) | quintillion | 1024 petabytes (1024⁶) | exbibyte |
| 1,180,591,620,717,411,303,424 | zettabyte (ZB) | sextillion | 1024 exabytes (1024⁷) | zebibyte |
| 1,208,925,819,614,629,174,706,176 | yottabyte (YB) | septillion | 1024 zettabytes (1024⁸) | yobibyte |
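As a rough illustration of how the table is used, the following Python sketch turns a raw byte count into the largest unit that fits by dividing by 1024 repeatedly. The function name `format_bytes` and the abbreviations (KiB, MiB, and so on, the standard short forms of the "correct name" column) are choices made for this example, not something the article prescribes.

```python
# Short forms of the power-of-1024 unit names, smallest to largest.
BINARY_UNITS = ["bytes", "KiB", "MiB", "GiB", "TiB", "PiB", "EiB", "ZiB", "YiB"]


def format_bytes(count: int) -> str:
    """Express a byte count in the largest power-of-1024 unit that fits."""
    value = float(count)
    unit_index = 0
    # Step up one unit for every factor of 1024 the value contains.
    while value >= 1024 and unit_index < len(BINARY_UNITS) - 1:
        value /= 1024
        unit_index += 1
    if unit_index == 0:
        return f"{count} bytes"
    return f"{value:.2f} {BINARY_UNITS[unit_index]}"


print(format_bytes(34))          # 34 bytes
print(format_bytes(5_218_805))   # 4.98 MiB
print(format_bytes(1024 ** 5))   # 1.00 PiB
```

The 5,218,805-byte Bible file comes out at just under five of the 1,048,576-byte units.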

What’s the difference between a “common name” and a “correct name”?

Here’s the controversy: the common name is the name we all tend to use. If you remember only one, remember this column.

Technically, however, these names refer specifically to powers of 1000, not the powers of 1024 you see listed above. A megabyte is exactly one million bytes, but we all use it to mean 1,048,576 bytes. The “correct name” column holds the proper name for each power of 1024. 1,048,576 bytes (1024 squared) is a mebibyte.
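The gap between the two meanings is small for kilobytes but grows with every step up. A short Python sketch of the arithmetic (the helper name `prefix_gap_percent` is made up for this example):

```python
PREFIXES = ["kilo", "mega", "giga", "tera", "peta", "exa", "zetta", "yotta"]


def prefix_gap_percent(power: int) -> float:
    """How much larger 1024**power is than 1000**power, as a percentage."""
    return (1024 ** power / 1000 ** power - 1) * 100


# kilo is about 2.4% larger in its power-of-1024 form; yotta is about 20.9% larger.
for power, prefix in enumerate(PREFIXES, start=1):
    print(f"{prefix:>5}: {prefix_gap_percent(power):4.1f}% larger")

# The same file measured both ways.
bible_bytes = 5_218_805
decimal_mb = bible_bytes / 1000 ** 2   # megabytes in the strict sense
binary_mib = bible_bytes / 1024 ** 2   # mebibytes
print(f"{decimal_mb:.2f} MB vs {binary_mib:.2f} MiB")  # 5.22 MB vs 4.98 MiB
```

At the megabyte level the difference is under 5%, which is why the loose usage rarely causes trouble for everyday files.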

That’s a lot of bytes.

Bytes and disks

As of this writing, the largest drive I can find online for personal computer use is about 22TB (terabytes). That’s a traditional spinning media hard drive made by Seagate. The largest solid-state drive (SSD) is typically 2TB, but if you want to ramp up the price, often dramatically, there are larger SSDs. I’ve seen mention of a 100TB SSD that costs roughly $40,000.

There will probably always be larger drives in development. I speculate that the largest hard drives on the horizon are on the order of 100TB to 1PB, with SSDs in that same range or higher.

It’s worth noting that most data centers do not use the bleeding-edge largest drives available. They achieve their highest capacity by using smaller drives with a known track record — often many, many more.

So I’m not looking for zettabyte drives any time soon.

Of FLOPS and Hertz

Even though it’s unrelated to what I’ve been talking about so far — counting bytes — I want to address the other terms mentioned in the question: FLOPS and hertz.

FLOPS and hertz are two different ways to measure how quickly a CPU performs its functions. They measure two different things, neither of which is about the storage terms we explored above.

Hertz is a unit of frequency: one hertz is one cycle per second. In a computer, it describes the speed of the clock; think of it as a simple pulse running at a particular speed that drives the CPU’s operation. A 1GHz computer has a clock running at one gigahertz (1 billion hertz). That pulse happens one billion times a second, so in theory, the computer can perform one billion operations a second. In practice, this isn’t exactly true: some operations take more than one “clock cycle”, while certain processor-specific optimizations allow more than one operation to happen simultaneously.
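Here is the clock arithmetic as a small Python sketch. The operations-per-cycle figures are invented purely to illustrate the "in theory" versus "in practice" point; they do not describe any real processor.

```python
def cycle_time_ns(clock_hz: float) -> float:
    """Length of one clock cycle in nanoseconds."""
    return 1e9 / clock_hz


def ops_per_second(clock_hz: float, ops_per_cycle: float) -> float:
    """Operations completed per second at a given average rate per cycle."""
    return clock_hz * ops_per_cycle


ONE_GHZ = 1_000_000_000

print(cycle_time_ns(ONE_GHZ))         # 1.0 (one nanosecond per cycle)
print(ops_per_second(ONE_GHZ, 1.0))   # 1000000000.0 (the "in theory" case)
print(ops_per_second(ONE_GHZ, 0.25))  # 250000000.0 (an operation needing four cycles)
print(ops_per_second(ONE_GHZ, 2.0))   # 2000000000.0 (two operations at once)
```

The clock speed sets the pace, but the work done per tick depends on the operation and the processor design.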

FLOP is short for Floating-Point Operation. FLOPS stands for Floating-Point Operations per Second.

CPUs operate best on integers. For example, that one-billion-operations-per-second I mentioned above could be adding two integers: whole numbers between 0 and 18,446,744,073,709,551,615 (2^64 − 1) on a 64-bit processor.
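The 64-bit range follows directly from the number of bits: 64 bits can hold 2^64 distinct values, so counting from zero, the largest is 2^64 − 1. A quick Python check (the signed range is included for comparison, assuming the usual two's-complement representation):

```python
BITS = 64

# Unsigned: every bit pattern counts upward from zero.
unsigned_max = 2 ** BITS - 1
print(f"{unsigned_max:,}")  # 18,446,744,073,709,551,615

# Signed (two's complement): half the patterns represent negative numbers.
signed_min = -(2 ** (BITS - 1))
signed_max = 2 ** (BITS - 1) - 1
print(f"{signed_min:,} to {signed_max:,}")
# -9,223,372,036,854,775,808 to 9,223,372,036,854,775,807
```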

Floating-point numbers are numbers with a decimal point. 3.141592653589, an approximation of pi, is a floating-point number, as are 2.5, 100.3, and so on. Operations on floating-point numbers are more work. Some consider them a better measure of computing power since they’re used heavily in scientific work. An example of a floating-point operation would be adding or multiplying two floating-point numbers.

The “petaflop” you mentioned refers to a computer that can perform a quadrillion floating-point operations per second. That is very fast.

The design of different processors means that their ability to perform floating-point operations varies dramatically even if they’re running at the same clock speed in gigahertz. For example, high-resolution graphics, especially those used in real-time virtual reality and video-intensive gaming, make heavy use of these types of operations. As a result, graphics cards often have processors designed for high FLOPS.
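One common back-of-the-envelope way to see why the same clock speed can yield very different FLOPS is to multiply the number of cores by the clock speed and by the floating-point operations each core can finish per cycle. The sketch below does that in Python. Both "chips" are hypothetical, with numbers picked only to show the effect; real peak figures depend on details this ignores.

```python
def peak_flops(cores: int, clock_hz: float, flops_per_cycle: int) -> float:
    """Theoretical peak: cores x cycles per second x floating-point ops per cycle."""
    return cores * clock_hz * flops_per_cycle


CLOCK_HZ = 1.5e9  # both hypothetical chips run at 1.5 GHz

# Hypothetical general-purpose chip: few cores, several FLOPs per cycle each.
cpu_like = peak_flops(cores=4, clock_hz=CLOCK_HZ, flops_per_cycle=8)

# Hypothetical graphics chip: thousands of simple cores.
gpu_like = peak_flops(cores=2048, clock_hz=CLOCK_HZ, flops_per_cycle=2)

print(cpu_like / 1e9)       # 48.0 (gigaFLOPS)
print(gpu_like / 1e9)       # 6144.0 (gigaFLOPS)
print(gpu_like / cpu_like)  # 128.0 (times faster at the same clock speed)

# How many of the graphics-style chips it would take to reach one petaFLOPS.
PETA = 1e15
print(PETA / gpu_like)
```

Same gigahertz, very different FLOPS, which is why one number cannot be converted into the other.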

The bottom line is that there is no FLOPS-to-gigahertz conversion. They measure two different types of a computer’s speed.

Do this

Now you have a better understanding of what words to expect to increase in popularity as our drives and storage get bigger.

And knowing that clock speed (GHz) and FLOPS are two different things, you can read much, much more about FLOPS and floating-point operations in the FLOPS Wikipedia article.

Petaflop is technically incorrect. FLOPS means Floating-Point Operations Per Second. So one floating-point operation per second would be 1 FLOPS, and a billion would be 1 gigaFLOPS.

And are petabytes a vegan food?

I believe there are megaflops on my computer, but not the good kind.

Megaflops can also be major box office disasters.